You work differently now

Your applications need to work differently, too

With Low-Code, now you can

Featured Customers

How We Help Our Clients

Automate

Build streamlined, comprehensive workflows that eliminate errors, reduce stress, and accelerate the pace of business.

Innovate

Identify trends, bring ideas to life, and scale effortlessly to increase profitability as well as customer and employee satisfaction.

Integrate

Bring together information from customers, suppliers, employees, and operations for deeper insight, better communication, and higher satisfaction.

Experience

Create unique and memorable experiences to delight customers, strengthen partner relationships, and increase employee loyalty.

Low-Code by the sprint.

Rapid and measurable results.

The ultimate results-driven model.

Tresbu Digital is proud to be a Mendix Solution Partner. Mendix, a part of Siemens, is the fast, easy way to build, integrate, and extend applications:

- Build apps 10x faster with 70% fewer resources

- Unleash domain experts while IT stays in control

- Unlock and extend your data systems

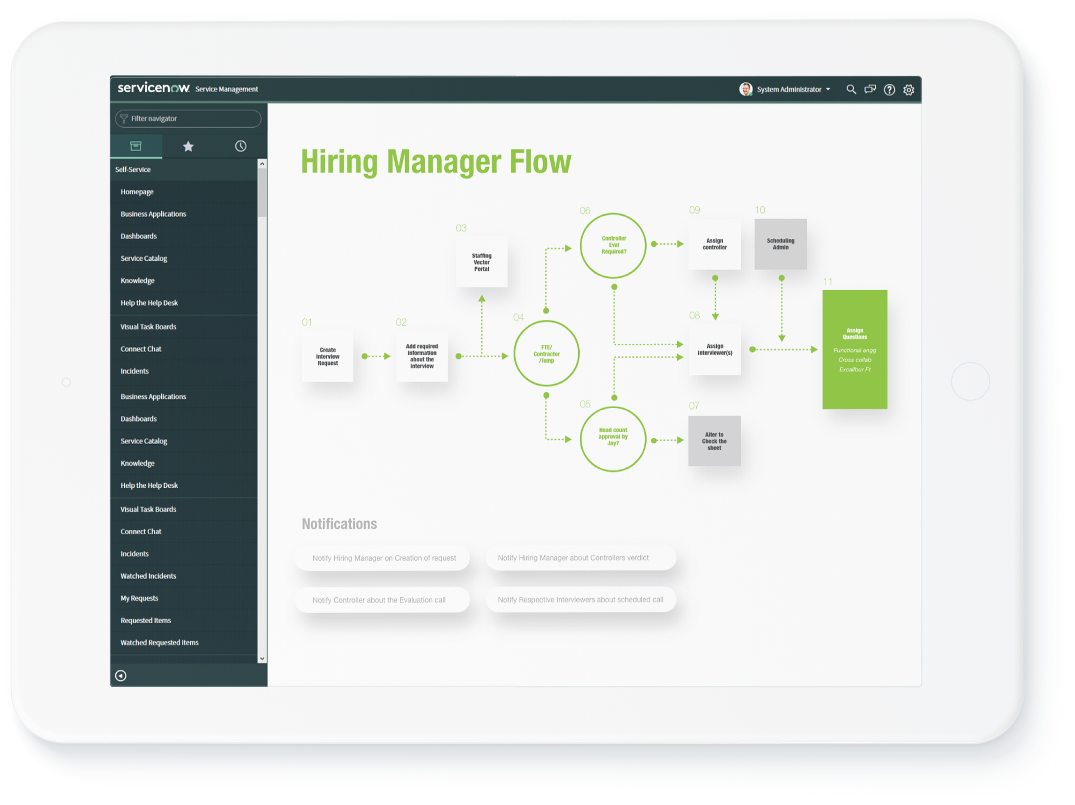

ServiceNow Development

We view the ServiceNow platform as the most efficient way to automate ITSM and ITOM to power business transformation.

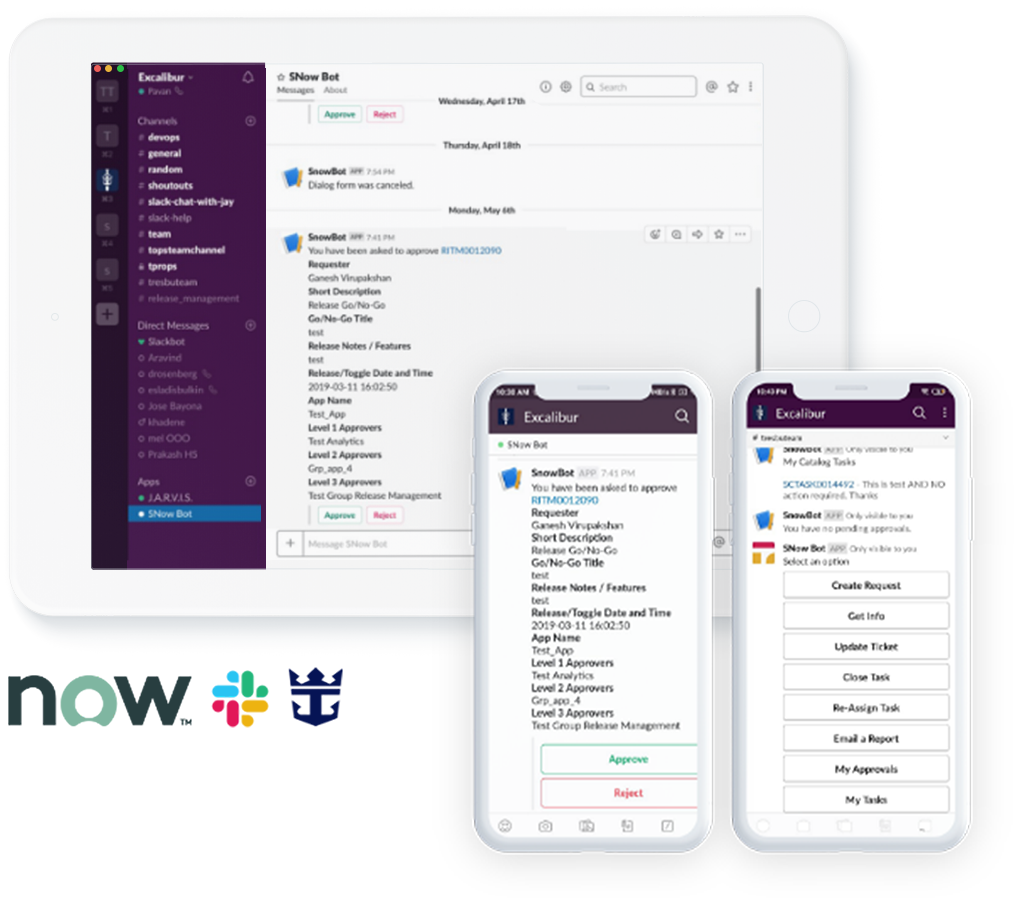

Royal Caribbean

Slackbot Actions

Integrate ServiceNow and Slack to automate approval processes and record all data in ServiceNow for auditing, while eliminating the need for multiple logins to infrequently used systems.

© 2021 Tresbu Digital, Inc. | Privacy Policy